Opening the Black Box of GPT-2

A series of Google Colab notebooks designed to introduce students to fundamental concepts in Large Language Models (LLMs) by unboxing the various layers of Generative Pre-trained Transformers (GPTs).

ChatGPT builds on earlier large language models such as GPT-2, whose weights were openly released. When GPT-2 was first announced in 2019, it was one of the largest publicly available models. With 1.5 billion parameters, GPT-2 demonstrated that transformer architectures, given appropriate training, offered significant advances in language understanding and text generation over previous approaches to Natural Language Processing (NLP). Compared to contemporary state-of-the-art models such as GPT-5.2 and Claude Opus 4.5, GPT-2 is significantly smaller and less capable; however, it offers a glimpse into how current AI models were developed.

Many of today's most advanced large language models are proprietary, which makes it difficult to study how they work. The purpose of these Colab notebooks is to introduce students to the fundamentals of transformer architectures using GPT-2, because GPT-2 is one of the first open-weight LLMs and because it represented a significant step in the historical development of AI.

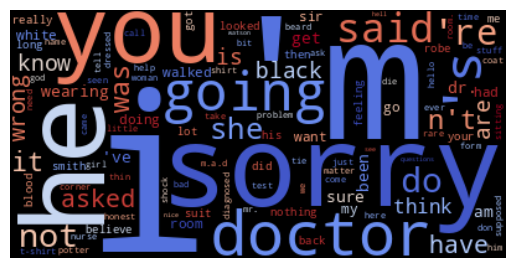

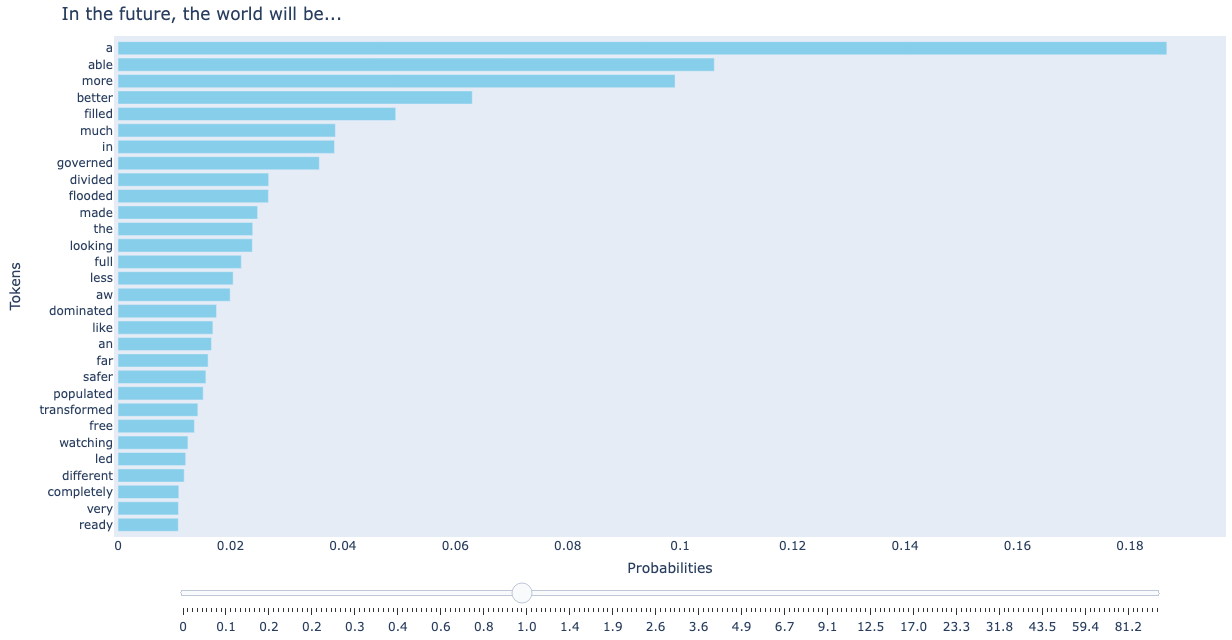

- This notebook is designed to give you a hands-on feel for fundamental issues pertaining to Generative Pre-trained Transformers (GPTs). Specifically, the notebook introduces concepts such as temperature, logits, and bias in Large Language Models (LLMs).

- This notebook explains how to fine-tune Large Language Models.

- This notebook covers how to extend the functionality of language models with retrieval-augmented generation (RAG).
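
As a rough preview of two concepts named in the first notebook, the sketch below shows how temperature rescales logits before they are turned into next-token probabilities. It is a minimal illustration in plain Python, not code taken from the notebooks: the function name `softmax_with_temperature` and the three logit values are hypothetical and chosen only for demonstration.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw logits into probabilities after dividing by a temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate next tokens (illustrative values only).
logits = [2.0, 1.0, 0.1]

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

Lower temperatures concentrate probability on the highest-scoring token, while higher temperatures flatten the distribution and make sampling more varied.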

Example of the module covering bias in GPT-2: